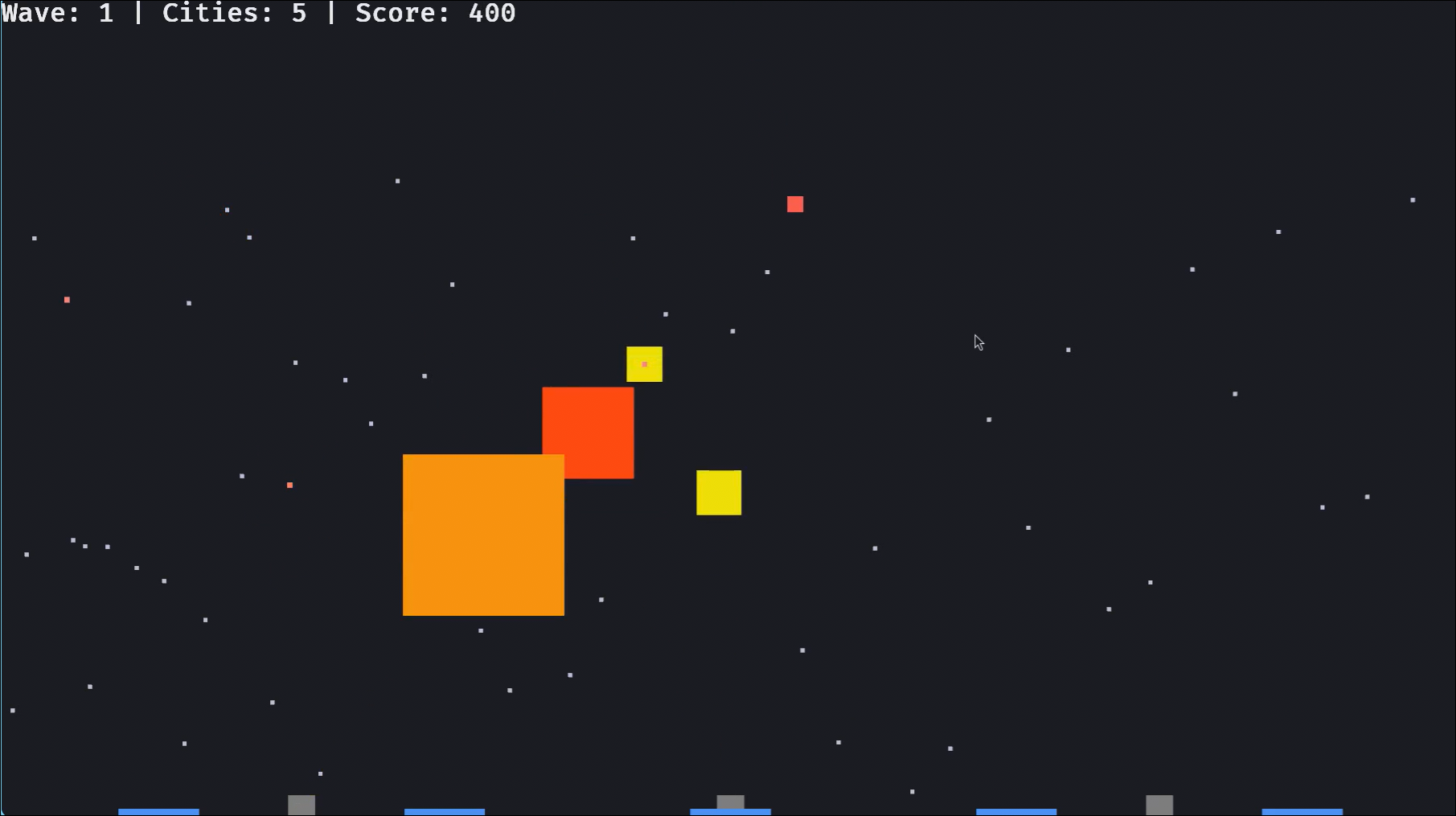

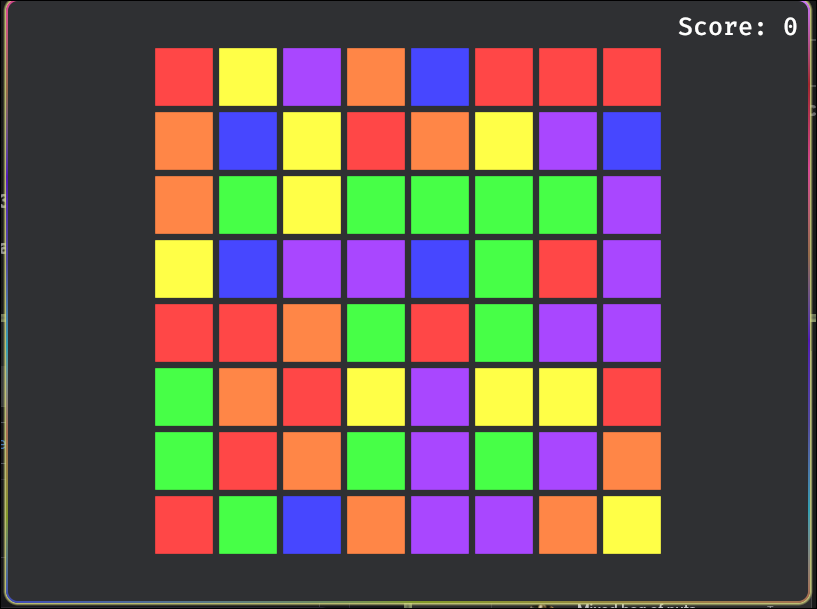

I wanted to try pointing Claude CLI at a local LLM and see how well it would work. My machine runs Linux (EndeavourOS) and has the following specs:

- Monitor: 3840x2160 in 27", 60 Hz [External]
- Monitor: 3840x2160 in 32", 60 Hz [External]
- CPU: 12th Gen Intel(R) Core(TM) i9-12900KF @ 5.20 GHz, 52.0°C
- GPU: AMD Radeon RX 9070, 48.0°C [Discrete]
- GPU Driver: amdgpu
- Vulkan: 1.4.335 - radv [Mesa 26.0.5-arch1.1]
- Motherboard: PRIME Z690M-PLUS D4 (Rev 1.xx)
- BIOS: 3811 (38.11)
- RAM: 23.94 GiB / 62.60 GiB (38%)

I use llama.cpp to run the model locally. During this experiment I kept hitting the context limit. I bumped it pretty high, but then again, I have plenty of memory. ...
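
For a rough feel of why a bigger context window eats memory, here is a back-of-the-envelope sketch of the key/value cache size. It assumes a standard transformer with grouped-query attention and an unquantized 16-bit cache. The model dimensions are hypothetical placeholders, not the model I actually ran, and the real numbers depend on the model and on how the runtime stores the cache.

```python
def kv_cache_bytes(n_ctx, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Approximate KV-cache size in bytes.

    Each token stores one key and one value vector (hence the factor 2)
    of size n_kv_heads * head_dim in every layer.
    bytes_per_elem=2 assumes a 16-bit cache (an assumption, not a measurement).
    """
    return 2 * n_layers * n_kv_heads * head_dim * n_ctx * bytes_per_elem


# Hypothetical model dimensions, chosen only for illustration.
HYPOTHETICAL_MODEL = dict(n_layers=32, n_kv_heads=8, head_dim=128)

if __name__ == "__main__":
    for n_ctx in (8192, 32768, 131072):
        gib = kv_cache_bytes(n_ctx, **HYPOTHETICAL_MODEL) / 2**30
        print(f"{n_ctx:>7} tokens -> {gib:.1f} GiB")
```

The cache grows linearly with the context length, so quadrupling the context quadruples this part of the memory bill, on top of the model weights themselves.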